Table of Contents¶

@[toc]

Introduction¶

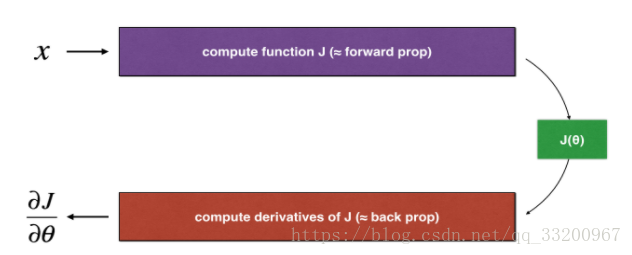

Backpropagation computes the gradient \(\frac{\partial J}{\partial \theta}\), where \(\theta\) represents the model parameters. \(J\) is calculated using forward propagation and a loss function. The formula is:

$$ \frac{\partial J}{\partial \theta} = \lim_{\varepsilon \to 0} \frac{J(\theta + \varepsilon) - J(\theta - \varepsilon)}{2 \varepsilon} \tag{1}$$

Since forward propagation is relatively easy to implement and verify, we first ensure \(J\) is computed correctly. This allows us to validate the gradient calculation \(\frac{\partial J}{\partial \theta}\) using the numerical approximation method.

1D Gradient Check¶

For a linear function \(J(\theta) = \theta x\), the model has one real-valued parameter \(\theta\) with input \(x\).

The diagram shows key steps: forward propagation (calculating \(J\)) and backward propagation (calculating \(\frac{\partial J}{\partial \theta}\)).

Import Dependencies¶

First, import required libraries. Some utilities can be downloaded from here.

# coding=utf-8

from testCases import *

from gc_utils import sigmoid, relu, dictionary_to_vector, vector_to_dictionary, gradients_to_vector

Forward Propagation¶

Linear forward propagation function:

def forward_propagation(x, theta):

"""

Implements forward propagation for J(theta) = theta * x

Arguments:

x -- input value (scalar)

theta -- parameter (scalar)

Returns:

J -- value of function J

"""

J = theta * x

return J

Backward Propagation¶

Linear backward propagation function:

def backward_propagation(x, theta):

"""

Computes the derivative of J with respect to theta

Arguments:

x -- input value (scalar)

theta -- parameter (scalar)

Returns:

dtheta -- gradient of J with respect to theta

"""

dtheta = x

return dtheta

Gradient Check Execution¶

To check the gradient:

1. Compute \(\theta^{+} = \theta + \varepsilon\) and \(\theta^{-} = \theta - \varepsilon\)

2. Calculate \(J^{+} = J(\theta^{+})\) and \(J^{-} = J(\theta^{-})\)

3. Approximate gradient: \(gradapprox = \frac{J^{+} - J^{-}}{2\varepsilon}\)

4. Compare with backward propagation result using:

$$ difference = \frac{|\text{grad} - \text{gradapprox}|_2}{|\text{grad}|_2 + |\text{gradapprox}|_2} \tag{2}$$

def gradient_check(x, theta, epsilon=1e-7):

"""

Implements gradient checking

Arguments:

x -- input value (scalar)

theta -- parameter (scalar)

epsilon -- small shift to compute approximated gradient

Returns:

difference -- difference between grad and gradapprox

"""

thetaplus = theta + epsilon # Step 1

thetaminus = theta - epsilon # Step 2

J_plus = thetaplus * x # Step 3

J_minus = thetaminus * x # Step 4

gradapprox = (J_plus - J_minus) / (2 * epsilon) # Step 5

grad = backward_propagation(x, theta) # Get backward propagation gradient

numerator = np.linalg.norm(grad - gradapprox) # Step 1'

denominator = np.linalg.norm(grad) + np.linalg.norm(gradapprox) # Step 2'

difference = numerator / denominator # Step 3'

if difference < 1e-7:

print("Gradient is correct!")

else:

print("Gradient is incorrect!")

return difference

if __name__ == "__main__":

x, theta = 2, 4

difference = gradient_check(x, theta)

print("difference = " + str(difference))

Output:

Gradient is correct!

difference = 2.91933588329e-10

Multi-dimensional Gradient Check¶

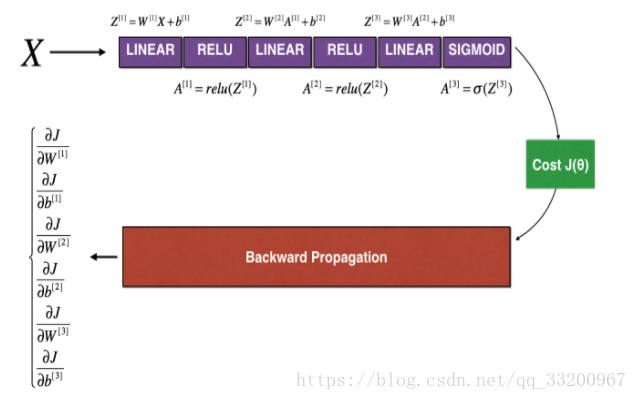

For multi-dimensional parameters, we extend the gradient check to parameter dictionaries.

LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID

Forward Propagation¶

Multi-dimensional forward propagation:

def forward_propagation_n(X, Y, parameters):

"""

Implements forward propagation (and computes cost)

Arguments:

X -- training set, shape (input size, m)

Y -- labels, shape (1, m)

parameters -- dictionary of parameters (W1, b1, W2, b2, W3, b3)

Returns:

cost -- computed cost

cache -- intermediate values for backward propagation

"""

m = X.shape[1]

W1 = parameters["W1"]

b1 = parameters["b1"]

W2 = parameters["W2"]

b2 = parameters["b2"]

W3 = parameters["W3"]

b3 = parameters["b3"]

Z1 = np.dot(W1, X) + b1

A1 = relu(Z1)

Z2 = np.dot(W2, A1) + b2

A2 = relu(Z2)

Z3 = np.dot(W3, A2) + b3

A3 = sigmoid(Z3)

logprobs = np.multiply(-np.log(A3), Y) + np.multiply(-np.log(1 - A3), 1 - Y)

cost = 1. / m * np.sum(logprobs)

cache = (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3)

return cost, cache

Backward Propagation¶

Multi-dimensional backward propagation:

def backward_propagation_n(X, Y, cache):

"""

Implements backward propagation

Arguments:

X -- input data

Y -- true labels

cache -- output from forward_propagation_n

Returns:

gradients -- dictionary of gradients

"""

m = X.shape[1]

(Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache

dZ3 = A3 - Y

dW3 = 1. / m * np.dot(dZ3, A2.T)

db3 = 1. / m * np.sum(dZ3, axis=1, keepdims=True)

dA2 = np.dot(W3.T, dZ3)

dZ2 = np.multiply(dA2, np.int64(A2 > 0))

dW2 = 1. / m * np.dot(dZ2, A1.T) * 2 # ERROR: Should be 1.0

db2 = 1. / m * np.sum(dZ2, axis=1, keepdims=True)

dA1 = np.dot(W2.T, dZ2)

dZ1 = np.multiply(dA1, np.int64(A1 > 0))

dW1 = 1. / m * np.dot(dZ1, X.T)

db1 = 4. / m * np.sum(dZ1, axis=1, keepdims=True) # ERROR: Should be 1.0

gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,

"dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,

"dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}

return gradients

Multi-dimensional Gradient Check¶

Iterate over each parameter to compute approximation:

def gradient_check_n(parameters, gradients, X, Y, epsilon=1e-7):

"""

Checks if backward_propagation_n computes gradients correctly

Arguments:

parameters -- dictionary of parameters

gradients -- gradients from backward_propagation_n

X -- input data

Y -- labels

epsilon -- small shift to compute approximated gradient

Returns:

difference -- difference between grad and gradapprox

"""

parameters_values, _ = dictionary_to_vector(parameters)

grad = gradients_to_vector(gradients)

num_parameters = parameters_values.shape[0]

J_plus = np.zeros((num_parameters, 1))

J_minus = np.zeros((num_parameters, 1))

gradapprox = np.zeros((num_parameters, 1))

for i in range(num_parameters):

thetaplus = np.copy(parameters_values)

thetaplus[i][0] += epsilon

J_plus[i], _ = forward_propagation_n(X, Y, vector_to_dictionary(thetaplus))

thetaminus = np.copy(parameters_values)

thetaminus[i][0] -= epsilon

J_minus[i], _ = forward_propagation_n(X, Y, vector_to_dictionary(thetaminus))

gradapprox[i] = (J_plus[i] - J_minus[i]) / (2 * epsilon)

numerator = np.linalg.norm(grad - gradapprox)

denominator = np.linalg.norm(grad) + np.linalg.norm(gradapprox)

difference = numerator / denominator

if difference > 2e-7:

print("\033[93m" + "Backpropagation has errors! difference = " + str(difference) + "\033[0m")

else:

print("\033[92m" + "Backpropagation is correct! difference = " + str(difference) + "\033[0m")

return difference

if __name__ == "__main__":

X, Y, parameters = gradient_check_n_test_case()

cost, cache = forward_propagation_n(X, Y, parameters)

gradients = backward_propagation_n(X, Y, cache)

difference = gradient_check_n(parameters, gradients, X, Y)

Initial Output (with errors):

Backpropagation has errors! difference = 0.285093156781

After fixing errors in dW2 and db1:

dW2 = 1. / m * np.dot(dZ2, A1.T) # Corrected: 1.0 instead of 2

db1 = 1. / m * np.sum(dZ1, axis=1, keepdims=True) # Corrected: 1.0 instead of 4

Corrected Output:

Backpropagation is correct! difference = 1.18904178766e-07

References¶

- http://deeplearning.ai/

This note is based on Andrew Ng’s course. For beginners, feel free to correct any misunderstandings!